Introduction to Google Gemini 2.0

Google has recently released Gemini 2.0, a large language model that is being compared to other models like DeepSeek R1 and OpenAI o3-mini. In this article, we will take a closer look at Gemini 2.0 and explore its features and capabilities.

Initial Reactions to Gemini 2.0

Initial reactions to Gemini 2.0 were mixed, with some people skeptical of its abilities. Google has taken a lot of criticism in recent years, but the release is being seen as a significant win for the company.

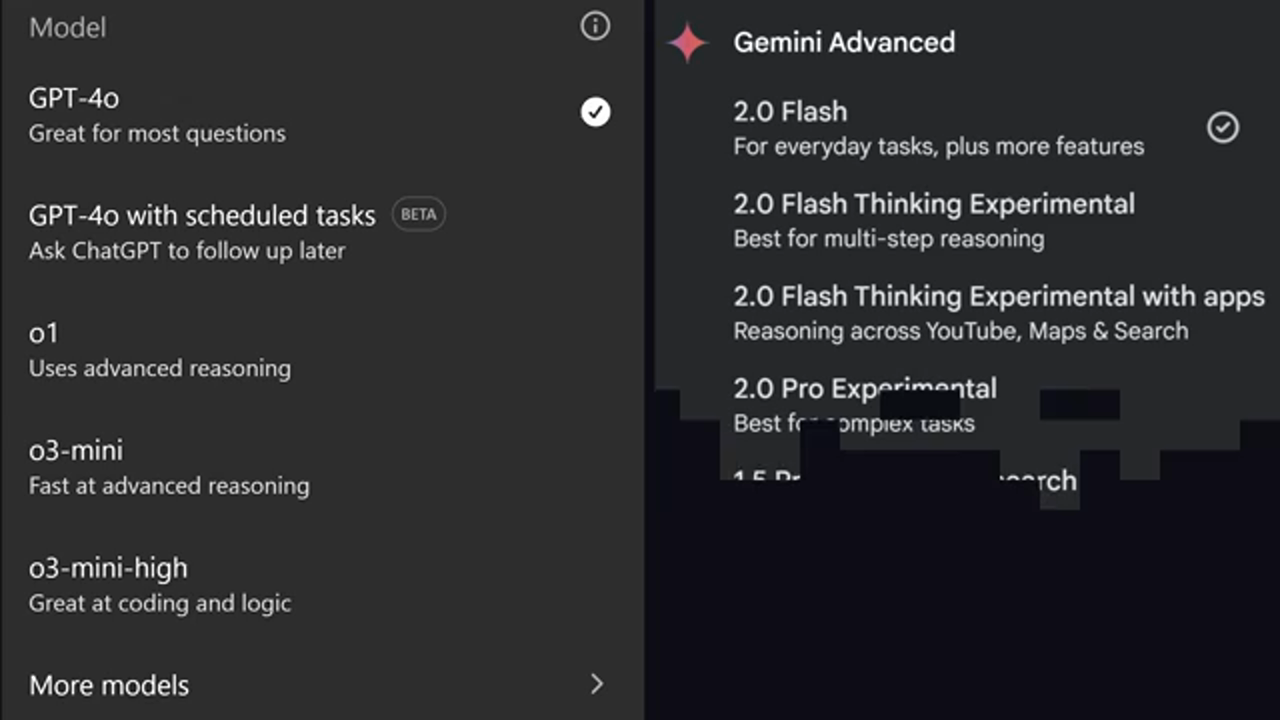

Comparison to Other Models

Gemini 2.0 is being compared to other models such as DeepSeek R1 and OpenAI o3-mini. It comes in several variants, including a light model and a pro model. The pro model is more expensive, but it has a larger context window and can handle more complex tasks.

Real-World Use Cases

Gemini 2.0 can be used for a variety of real-world tasks, including PDF processing and chatbots. One of its most impressive features is the ability to process large amounts of data at a fraction of the cost of other models. For example, it can process 6,000 pages of PDFs with better accuracy than other models.

Cost and Accessibility

Gemini 2.0 is significantly cheaper than other models, with some estimates suggesting it is over 90% cheaper. That lower cost makes it more accessible to developers and businesses.
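To make a "percent cheaper" figure concrete, here is a minimal Python sketch of the arithmetic. The prices are hypothetical placeholders, not published rates for Gemini 2.0 or any other model, and the function names are illustrative only. Substitute the current per-token prices from each provider before drawing conclusions.

```python
def percent_cheaper(baseline_price: float, candidate_price: float) -> float:
    """Return how much cheaper the candidate is than the baseline, in percent."""
    if baseline_price <= 0:
        raise ValueError("baseline_price must be positive")
    return (baseline_price - candidate_price) * 100.0 / baseline_price


def job_cost(total_tokens: int, price_per_million_tokens: float) -> float:
    """Return the cost of processing total_tokens at a per-million-token price."""
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical prices in dollars per million tokens (assumptions, not real rates).
BASELINE_PRICE = 10.00
CANDIDATE_PRICE = 0.50

# Hypothetical workload size in tokens (assumption for illustration).
WORKLOAD_TOKENS = 3_000_000

savings = percent_cheaper(BASELINE_PRICE, CANDIDATE_PRICE)
print(f"Candidate is {savings:.1f}% cheaper than the baseline")
print(f"Baseline job cost:  ${job_cost(WORKLOAD_TOKENS, BASELINE_PRICE):.2f}")
print(f"Candidate job cost: ${job_cost(WORKLOAD_TOKENS, CANDIDATE_PRICE):.2f}")
```

With these placeholder numbers the candidate clears the 90% threshold, but the result depends entirely on which prices and which models are compared.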

Benchmarks and Performance

Gemini 2.0 has been benchmarked against other models, with mixed results. While it performs well in some areas, it falls behind in others.

Conclusion and Future Developments

The future of Gemini 2.0 and other large language models is both exciting and uncertain. The release of Gemini 2.0 is just the beginning of a new era, and as the technology continues to evolve, we can expect to see even more impressive developments.

Deploying Your App with Sevalla

Sevalla is a platform that allows you to deploy your app without complexity. With Sevalla, you can deploy full-stack applications, databases, and static websites, all backed by Google Kubernetes Engine and Cloudflare.

Final Thoughts

The release of Gemini 2.0 is a significant development in the world of large language models. Given its features and capabilities, this is an exciting time for developers and businesses looking to leverage the power of AI.
