Introduction to Self-Hosting n8n for Free

Self-hosting n8n is a cost-effective way to automate workflows without paying for n8n Cloud. In this article, we walk through running n8n on your own machine for free with npm, then connecting it to a local LLM through Ollama.

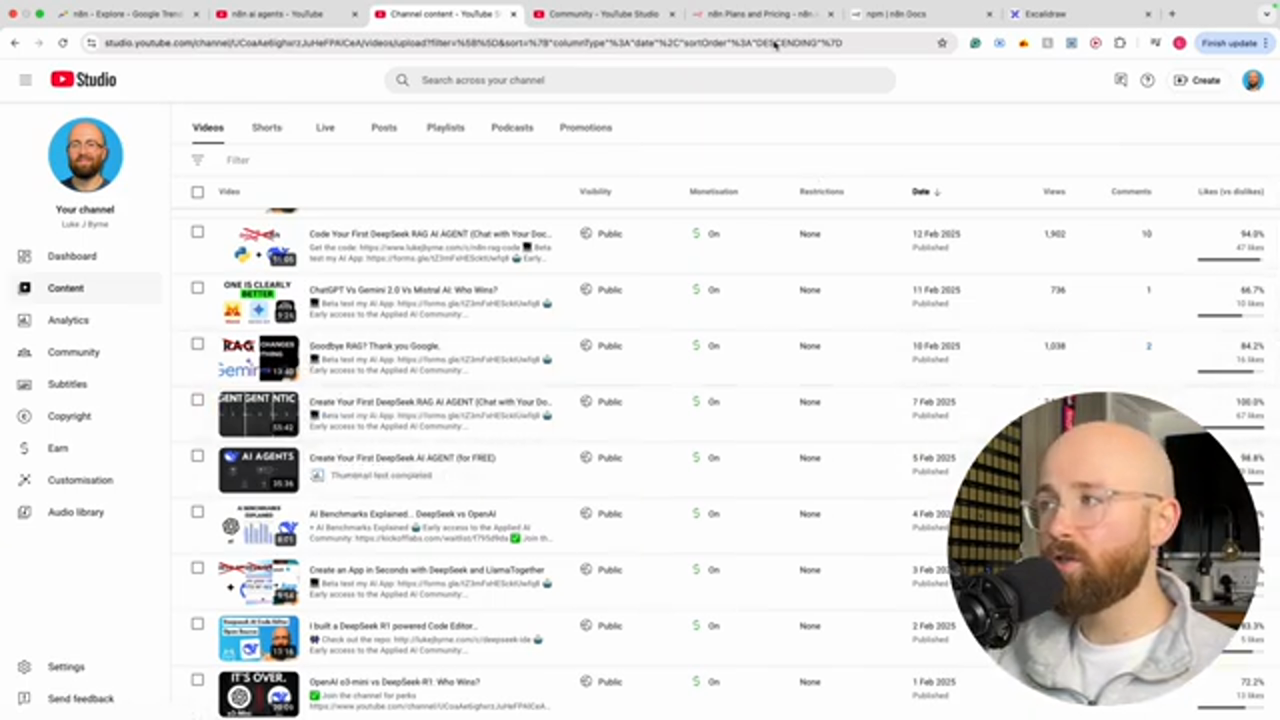

Why n8n is Blowing Up

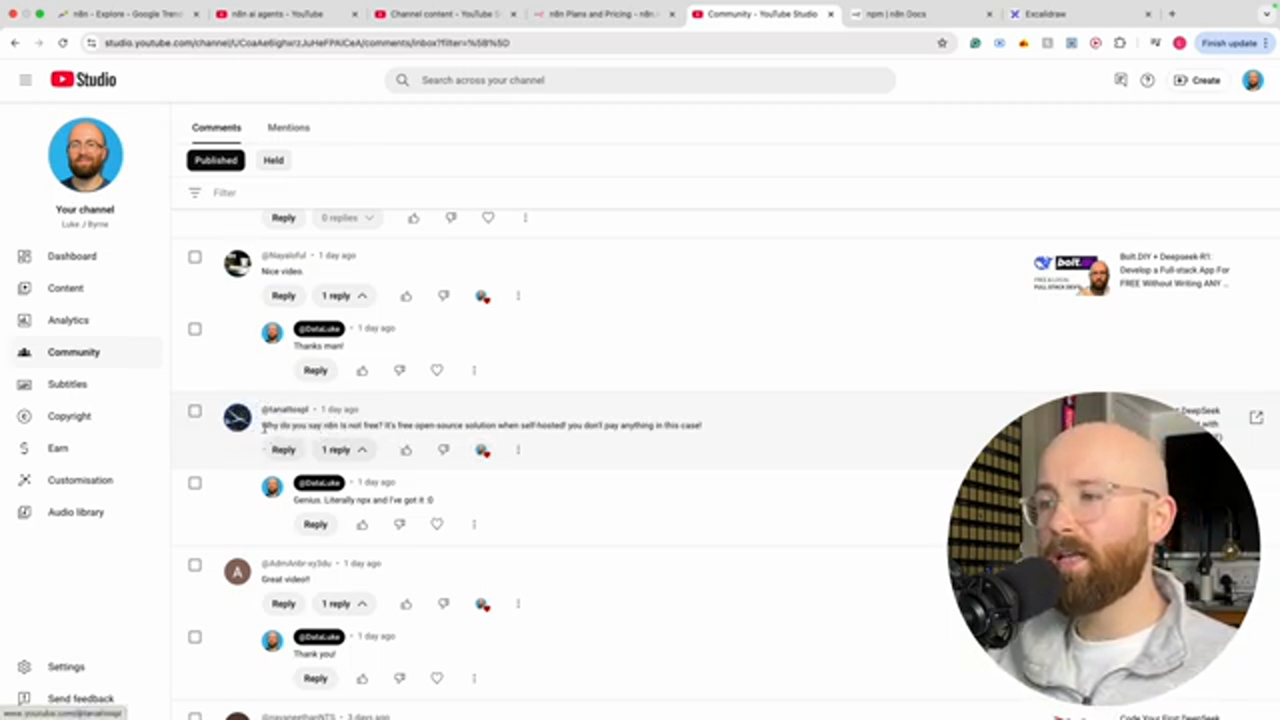

Google Trends and YouTube both show that interest in n8n has grown quickly. The creator of the video this article is based on had already made a couple of n8n videos using the free trial of n8n Cloud, whose paid plans start at $20 a month (Starter) and $50 a month (Pro). However, a commenter pointed out that while n8n Cloud is a paid service, n8n itself is free to self-host, because its source code is publicly available under n8n's fair-code license.

How to Self-Host n8n

There are two common ways to self-host n8n: with npm or with Docker. Docker packages applications into containers, which makes them easier to manage and deploy. In this tutorial we use npm, the package manager that ships with Node.js, so the only prerequisite is Node.js itself.

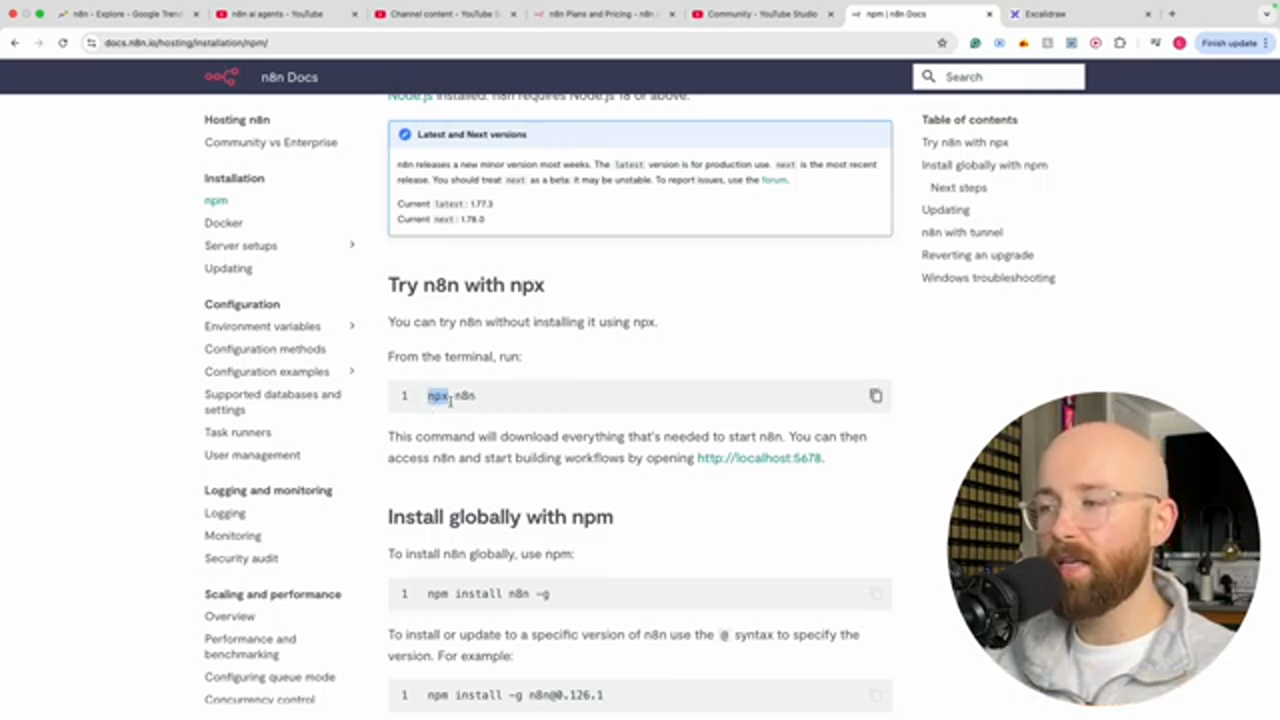

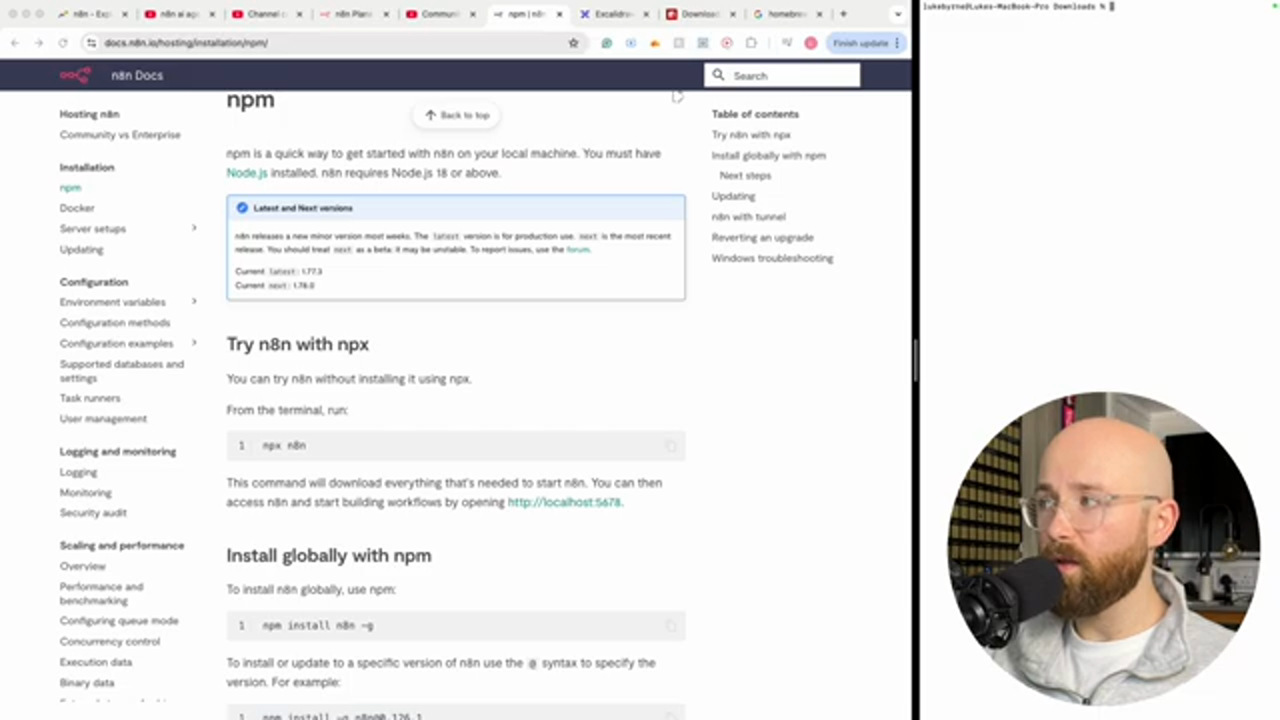

Installing Node.js and npm

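On macOS, you can install Node.js (which includes npm) with a package manager such as Homebrew, using `brew install node`. With Node.js in place, you have two options. You can install n8n globally with `npm install n8n -g` and then start it with `n8n`. Alternatively, you can skip the global install and run it directly with `npx n8n`.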
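
Before installing n8n, it can help to confirm that your Node.js version is recent enough. The TypeScript sketch below reads `process.version` and compares its major version number against a minimum. You can run it with a TypeScript runner such as tsx, or compile it with tsc. The minimum of 20 is an assumption chosen for illustration. Check n8n's documentation for the Node.js versions it currently supports.

```typescript
// Minimal sketch: check that the running Node.js version meets a minimum major version.
// ASSUMPTION: MIN_NODE_MAJOR = 20 is illustrative; confirm the supported range in n8n's docs.
const MIN_NODE_MAJOR = 20;

// Parse a version string such as "v20.11.1" and return its major number (20).
function nodeMajor(version: string): number {
  const match = /^v?(\d+)\./.exec(version);
  if (!match) {
    throw new Error(`Unrecognized Node.js version string: ${version}`);
  }
  return Number(match[1]);
}

// True when the major version is at least minMajor.
function meetsMinimum(version: string, minMajor: number = MIN_NODE_MAJOR): boolean {
  return nodeMajor(version) >= minMajor;
}

// process.version is the version of the Node.js runtime executing this script.
if (meetsMinimum(process.version)) {
  console.log(`Node.js ${process.version} looks new enough to try "npx n8n".`);
} else {
  console.log(`Node.js ${process.version} is older than v${MIN_NODE_MAJOR}; upgrade before installing n8n.`);
}
```

Only the major version is compared. A stricter check could also compare minor versions if n8n's requirement names one.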

Running n8n Locally

Running `npx n8n` starts the n8n server. On the first run, npx may ask for confirmation before downloading the n8n package, and the download can take a while. Once the server is up, open http://localhost:5678 in your browser (5678 is n8n's default port).
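
If you script your setup, you may want to wait until the server is actually answering before opening the browser. This TypeScript sketch polls the instance using the global `fetch` available in Node.js 18 and later. It makes three assumptions: n8n is on the default http://localhost:5678, n8n exposes a `/healthz` endpoint, and the attempt count and delay (both arbitrary values) suit your machine.

```typescript
// Minimal sketch: poll a local n8n instance until it responds.
// ASSUMPTIONS: default base URL http://localhost:5678, a GET /healthz endpoint,
// and illustrative retry settings (5 attempts, 1 second apart).

// Build the health-check URL, tolerating a trailing slash on the base URL.
function healthUrl(baseUrl: string): string {
  return `${baseUrl.replace(/\/+$/, "")}/healthz`;
}

// One check: true if the server answers with a 2xx status.
async function isN8nUp(baseUrl: string): Promise<boolean> {
  try {
    const res = await fetch(healthUrl(baseUrl));
    return res.ok;
  } catch {
    // Connection refused or similar: the server is not up (yet).
    return false;
  }
}

// Retry a few times, sleeping between attempts but not after the last one.
async function waitForN8n(
  baseUrl = "http://localhost:5678",
  attempts = 5,
  delayMs = 1000,
): Promise<boolean> {
  for (let i = 0; i < attempts; i++) {
    if (await isN8nUp(baseUrl)) return true;
    if (i < attempts - 1) {
      await new Promise((resolve) => setTimeout(resolve, delayMs));
    }
  }
  return false;
}

waitForN8n().then((up) => {
  console.log(
    up
      ? "n8n is running: open http://localhost:5678"
      : "n8n did not respond; is `npx n8n` still starting?",
  );
});
```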

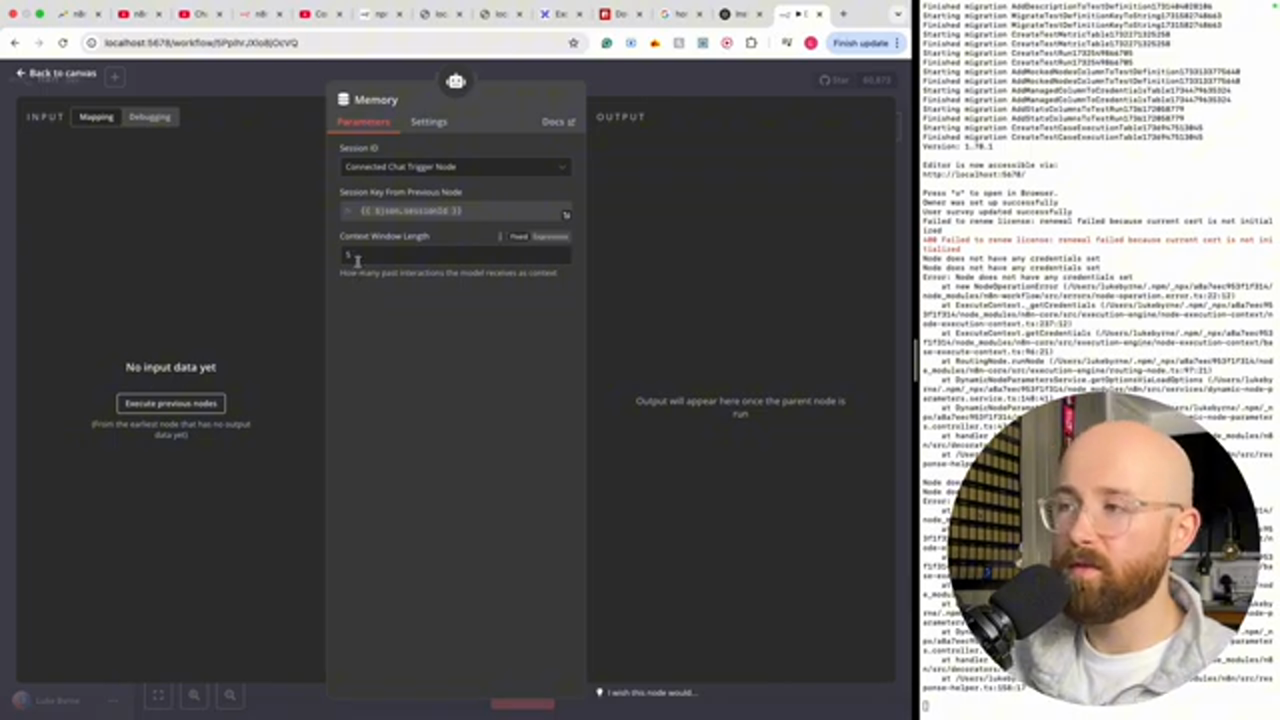

Setting Up n8n

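On first launch, n8n asks us to create an owner account. This account lives in our own instance, not on n8n's cloud. After that, we can create a new workflow in the built-in visual editor by adding a trigger node and connecting further nodes to it.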
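
Behind the visual editor, an n8n workflow is a graph. It consists of a list of nodes plus connections that describe which node's output feeds which node's input, and this is the structure you see when you export a workflow as JSON. The TypeScript sketch models a simplified version of that shape, with a Manual Trigger feeding an Edit Fields node. The field layout and node type strings reflect n8n's export format as far as we know. They omit required fields such as ids, positions, and parameters, so treat the object as illustrative rather than importable. `flowOrder` is a hypothetical helper that lists reachable nodes breadth-first; it is not how n8n itself schedules execution.

```typescript
// Simplified, illustrative model of an n8n workflow (not a complete, importable export).
interface WorkflowNode {
  name: string; // display name, also used as the key in `connections`
  type: string; // node type identifier (illustrative values below)
}

interface Connection {
  node: string;  // name of the target node
  type: "main";  // connection kind
  index: number; // which input of the target node
}

interface Workflow {
  name: string;
  nodes: WorkflowNode[];
  // connections[sourceName].main[outputIndex] lists the targets wired to that output.
  connections: Record<string, { main: Connection[][] }>;
}

const workflow: Workflow = {
  name: "My first workflow",
  nodes: [
    { name: "Manual Trigger", type: "n8n-nodes-base.manualTrigger" },
    { name: "Edit Fields", type: "n8n-nodes-base.set" },
  ],
  connections: {
    "Manual Trigger": { main: [[{ node: "Edit Fields", type: "main", index: 0 }]] },
  },
};

// Hypothetical helper: list nodes reachable from `start`, breadth-first.
function flowOrder(wf: Workflow, start: string): string[] {
  const order: string[] = [];
  const seen = new Set<string>();
  const queue: string[] = [start];
  while (queue.length > 0) {
    const current = queue.shift()!;
    if (seen.has(current)) continue;
    seen.add(current);
    order.push(current);
    for (const output of wf.connections[current]?.main ?? []) {
      for (const target of output) queue.push(target.node);
    }
  }
  return order;
}

console.log(flowOrder(workflow, "Manual Trigger"));
```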

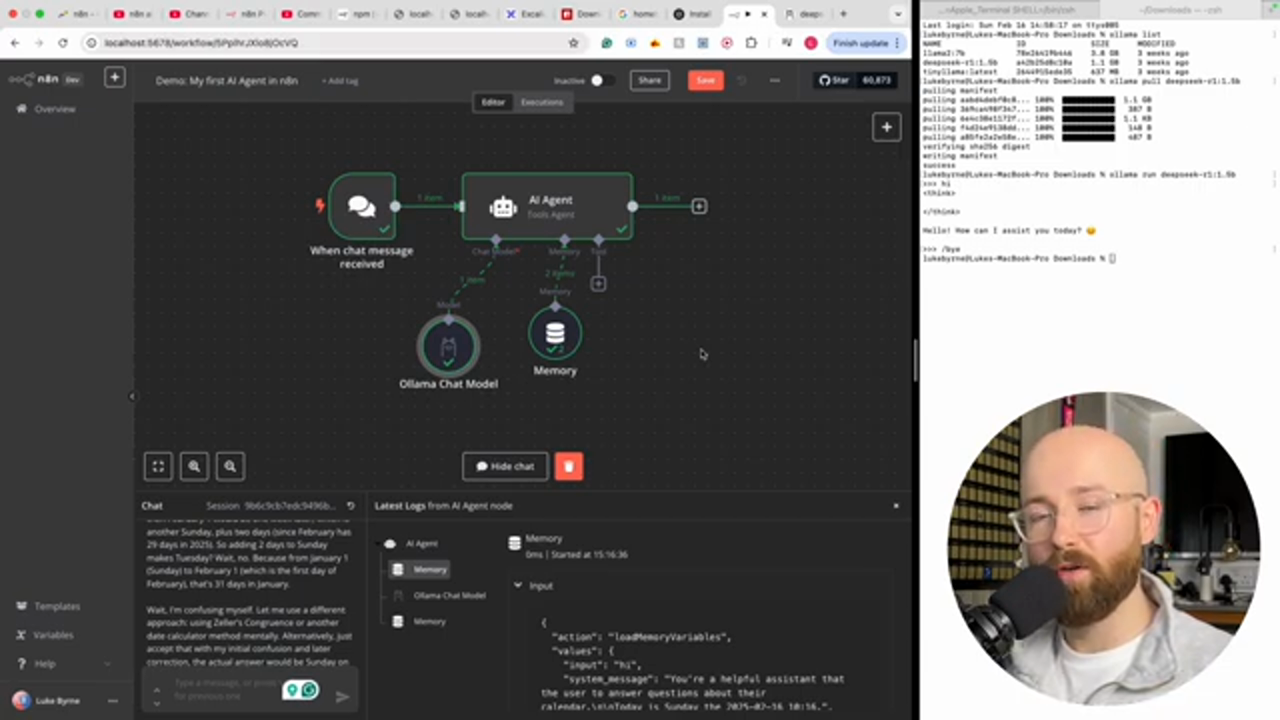

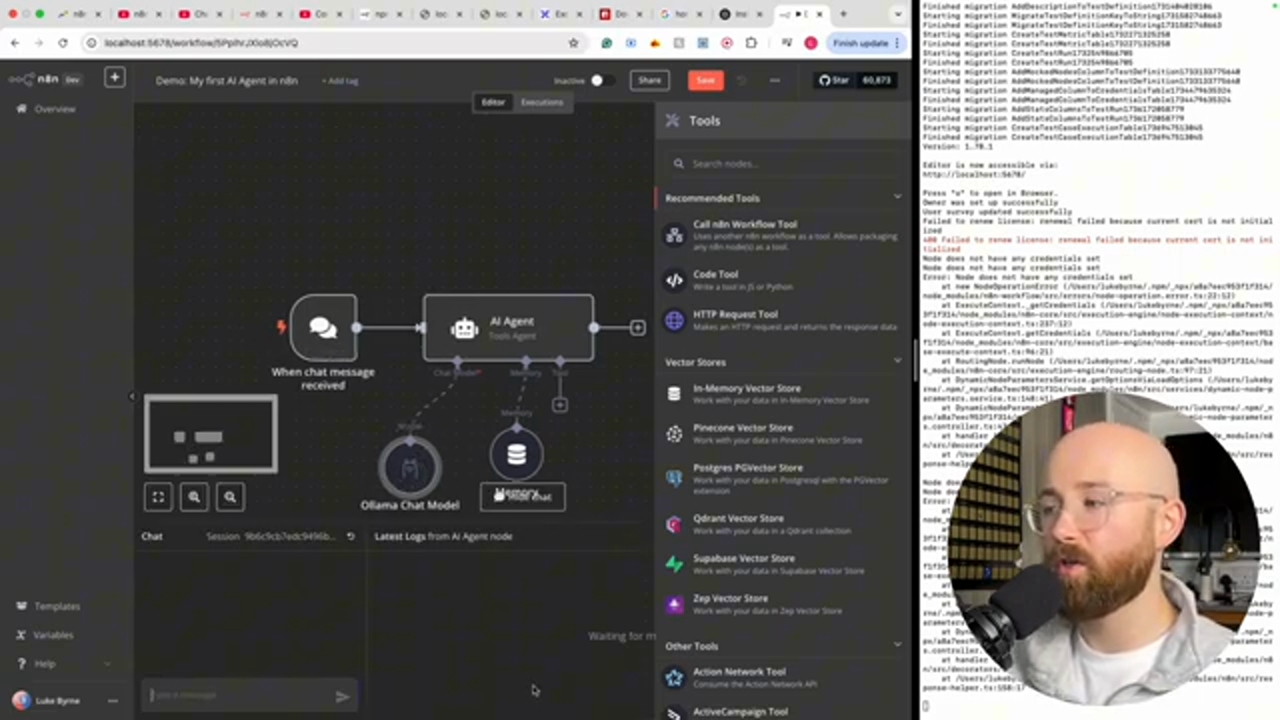

Integrating AI Agents with n8n

AI agents are built in the same workflow editor. We create a new workflow, add the AI Agent node, and attach a chat model to it, optionally along with memory and tools.
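
Once an agent workflow works in the editor, other programs can call it if the workflow starts with a Webhook trigger node instead of a chat trigger. This TypeScript sketch posts a question to such a workflow. Several details are hypothetical and depend on how you configure the Webhook and AI Agent nodes: the webhook path `ask-agent`, the `chatInput` field name, and the shape of the response. The `/webhook-test/` versus `/webhook/` prefixes reflect n8n's separate test and production webhook URLs.

```typescript
// Minimal sketch: send a question to an n8n workflow that begins with a Webhook node
// and passes the text to an AI Agent node.
// HYPOTHETICAL: the path "ask-agent", the "chatInput" field, and the response format.

// n8n serves test webhooks (while the editor is listening) under /webhook-test/
// and production webhooks (once the workflow is active) under /webhook/.
function webhookUrl(baseUrl: string, path: string, testMode: boolean): string {
  const prefix = testMode ? "webhook-test" : "webhook";
  return `${baseUrl.replace(/\/+$/, "")}/${prefix}/${path.replace(/^\/+/, "")}`;
}

async function askAgent(question: string, testMode = true): Promise<unknown> {
  const res = await fetch(webhookUrl("http://localhost:5678", "ask-agent", testMode), {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ chatInput: question }),
  });
  if (!res.ok) {
    throw new Error(`n8n returned HTTP ${res.status}`);
  }
  // Whatever the workflow's last node returns, if the Webhook is set to respond then.
  return res.json();
}

askAgent("What can you help me with?")
  .then((answer) => console.log(answer))
  .catch((err) => console.error("Request failed:", err));
```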

Running LLMs Locally with Ollama

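To avoid paying for a hosted model API, we can run large language models (LLMs) locally with Ollama, a free tool that downloads open models and serves them on your own machine. n8n can then use a local Ollama model as the chat model for the AI Agent node.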
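
Before wiring Ollama into n8n, it is worth checking that a model responds. Ollama serves an HTTP API on port 11434 by default. This TypeScript sketch calls its `/api/generate` endpoint with streaming turned off, so the answer arrives as a single JSON object. The model name `llama3.2` is an assumption; use whichever model you have pulled. The same base URL, http://localhost:11434, is what you would point n8n's Ollama credentials at.

```typescript
// Minimal sketch: call a local Ollama server directly to confirm a model answers.
// ASSUMPTIONS: Ollama on its default port 11434, and the model "llama3.2"
// already pulled (for example with `ollama pull llama3.2`).

interface GenerateRequest {
  model: string;
  prompt: string;
  stream: boolean;
}

interface GenerateResponse {
  response: string; // generated text when stream is false
}

// stream: false asks Ollama for one JSON object instead of a stream of chunks.
function buildGenerateRequest(model: string, prompt: string): GenerateRequest {
  return { model, prompt, stream: false };
}

async function generate(
  prompt: string,
  model = "llama3.2",
  baseUrl = "http://localhost:11434",
): Promise<string> {
  const res = await fetch(`${baseUrl}/api/generate`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildGenerateRequest(model, prompt)),
  });
  if (!res.ok) {
    throw new Error(`Ollama returned HTTP ${res.status}`);
  }
  const data = (await res.json()) as GenerateResponse;
  return data.response;
}

generate("In one sentence, what is n8n?")
  .then((text) => console.log(text))
  .catch((err) => console.error("Is Ollama running?", err));
```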

Conclusion

Self-hosting n8n is a cost-effective way to automate workflows without paying for n8n Cloud. With npm we can install n8n and run it locally, the AI Agent node lets us add AI agents to our workflows, and Ollama lets us run the underlying LLM locally for free.

Final Thoughts

Together, n8n and Ollama give us a setup for automating workflows and building AI agents that runs entirely on our own machine, with no subscription required.

Next Steps

The next step is to keep learning and to share what we learn about n8n and AI with a community.

Join the Applied AI Community

We can join the Applied AI Community to learn and share knowledge about n8n and AI.