OpenAI's Nightmare: How DeepSeek R1 is Disrupting the AI Sector

OpenAI's dominance in the AI sector is being challenged by DeepSeek, a new startup whose open-weights model, R1, reportedly beats OpenAI's best models on most benchmarks.

Introduction to DeepSeek R1

Image 1: OpenAI's Nightmare

DeepSeek, a new AI startup backed by a Chinese hedge fund, has released an open-weights model called R1 that reportedly beats OpenAI's best models on most benchmarks. This has sent shockwaves through the AI sector, with many wondering how a relatively small startup could achieve such impressive results.

What can a Pi 5 do, really?

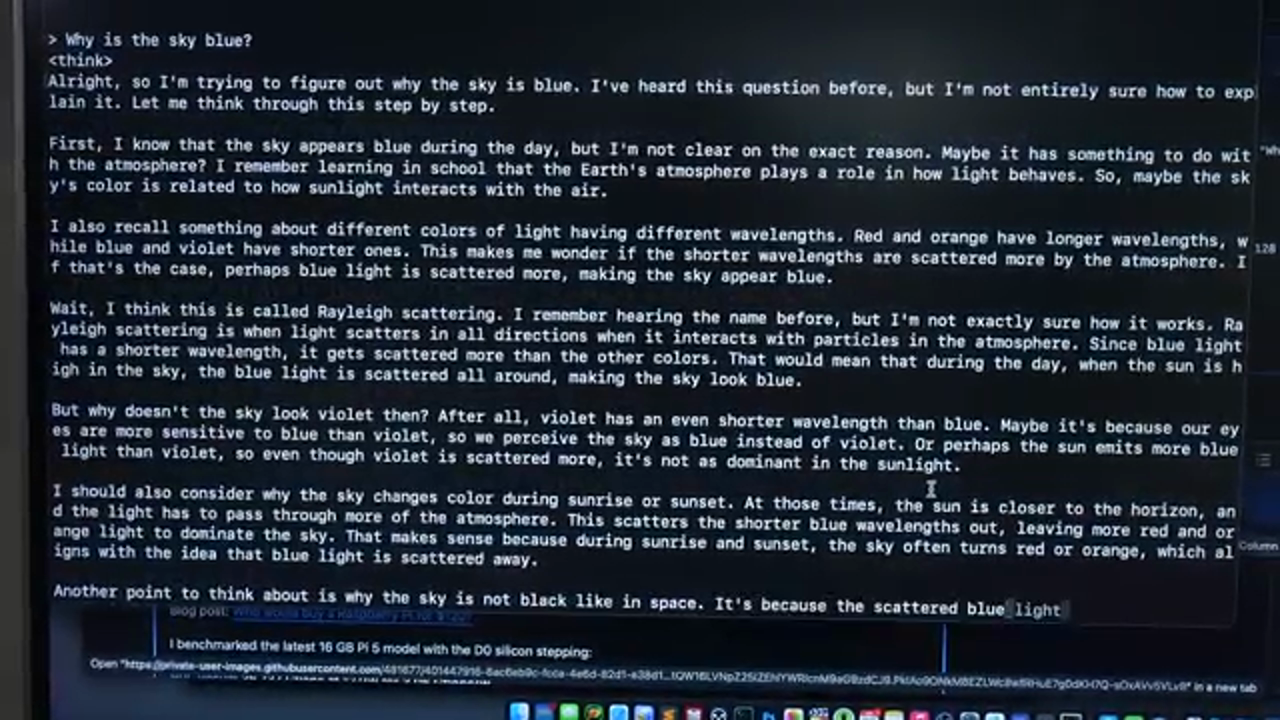

Image 2: Pi 5 Capabilities

The Pi 5, a small and relatively inexpensive computer, can run DeepSeek R1, but only with some limitations. DeepSeek used R1 to distill its reasoning ability into smaller models, including several based on Alibaba's Qwen, that run better on slower hardware, which means a Raspberry Pi can run one of the best local Qwen-based AI models.
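
DeepSeek's R1 paper describes producing its distilled models by fine-tuning smaller open models (Qwen- and Llama-based) on reasoning samples generated by R1. As a rough illustration of that idea, not DeepSeek's actual pipeline, the sketch below shows only the data-collection step: a teacher model answers prompts, and the prompt/answer pairs become a supervised fine-tuning set. The `toy_teacher` function and the `prompt`/`completion` field names are hypothetical placeholders.

```python
import json
from typing import Callable, Iterable


def build_distillation_set(prompts: Iterable[str],
                           teacher: Callable[[str], str]) -> list[dict[str, str]]:
    """Collect (prompt, teacher completion) pairs for supervised fine-tuning."""
    return [{"prompt": p, "completion": teacher(p)} for p in prompts]


def to_jsonl(records: list[dict[str, str]]) -> str:
    """Serialize records as JSON Lines, one object per line."""
    return "\n".join(json.dumps(r) for r in records)


if __name__ == "__main__":
    # Hypothetical stand-in for a large teacher model such as R1.
    def toy_teacher(prompt: str) -> str:
        return f"<think>reasoning about {prompt!r}</think> answer"

    data = build_distillation_set(["2+2?", "capital of France?"], toy_teacher)
    print(to_jsonl(data))
```

The small "student" model would then be trained on this file with ordinary supervised fine-tuning; that step is omitted here.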

Beating OpenAI with 1% of the Resources

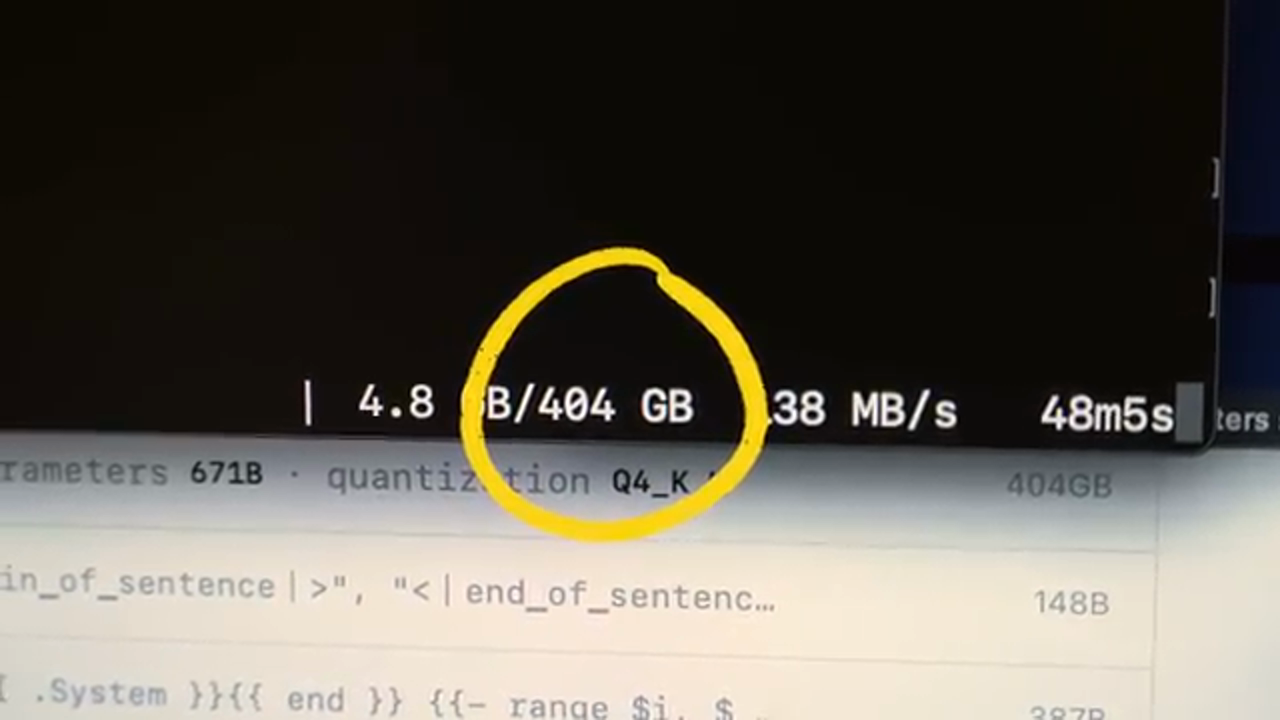

Image 3: DeepSeek R1 671b

DeepSeek's model reportedly beats OpenAI's best models on most benchmarks, and it was reportedly trained for about $6 million, on export-restricted GPUs with roughly half the interconnect bandwidth of the ones available to OpenAI. That is a significant achievement, because OpenAI's entire business model is predicated on most people lacking the enormous energy and GPU resources needed to train and run massive AI models.

Running DeepSeek R1 on a Raspberry Pi

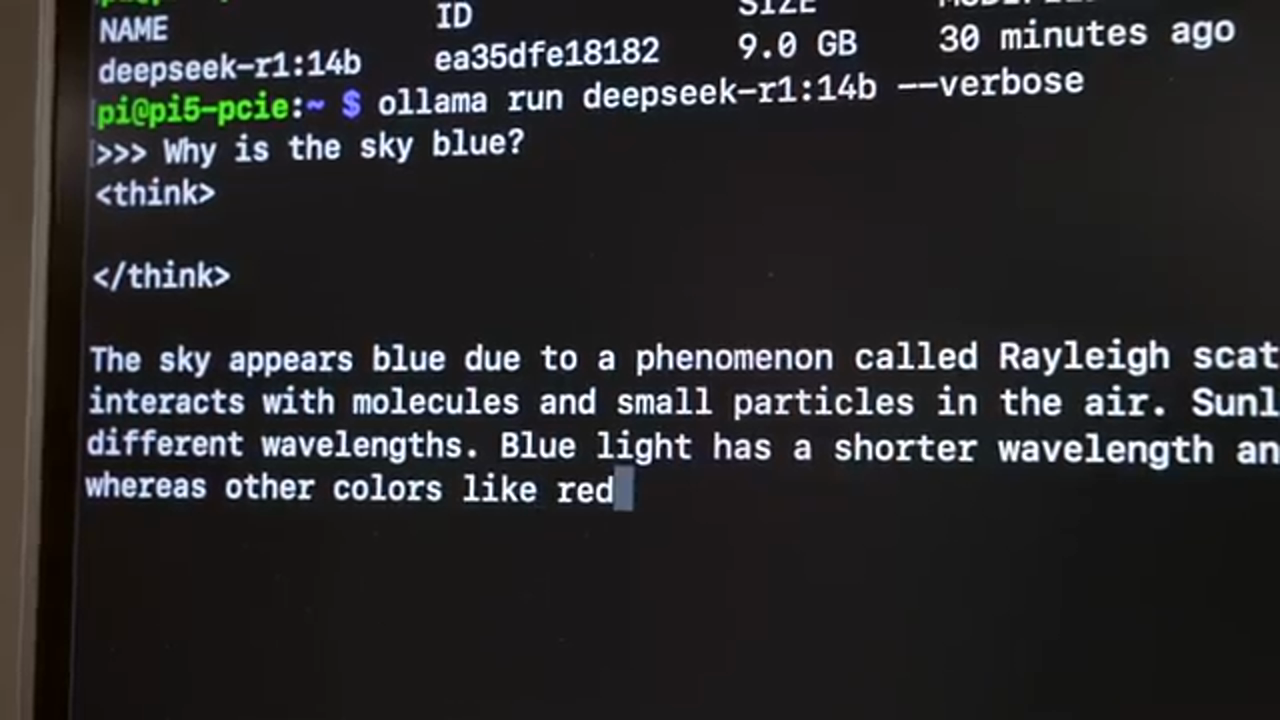

Image 4: Raspberry Pi

The Raspberry Pi can technically run DeepSeek R1, but not the full DeepSeek R1 671b, a roughly 400 GB model that requires a massive amount of GPU compute. The much smaller 14b distilled model does run on a Raspberry Pi, albeit slowly, at about 1.2 tokens per second.
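
The size gap follows from simple arithmetic: weight storage is roughly parameter count times bits per weight. The sketch below assumes an average of about 4.8 bits per weight, roughly what common 4-bit quantization formats work out to; that figure is an assumption, and real model files also carry metadata and some higher-precision tensors. It also turns the 1.2 tokens per second figure into waiting time for a hypothetical 500-token reply.

```python
def model_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of quantized weights in gigabytes (1 GB = 1e9 bytes).

    Ignores file metadata, tensors kept at higher precision, and runtime
    memory such as the KV cache.
    """
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def seconds_for_reply(tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply of the given length at a steady rate."""
    return tokens / tokens_per_second


if __name__ == "__main__":
    bits = 4.8  # assumed average bits per weight for a 4-bit quantization
    print(f"671b: {model_size_gb(671, bits):.0f} GB")
    print(f"14b:  {model_size_gb(14, bits):.1f} GB")
    # 500 tokens is a hypothetical reply length; 1.2 tok/s is the Pi figure above
    print(f"500 tokens at 1.2 tok/s: {seconds_for_reply(500, 1.2):.0f} s")
```

Under these assumptions the script prints about 403 GB for 671b and 8.4 GB for 14b, and about 417 seconds (roughly seven minutes) for a 500-token reply on the Pi.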

Speeding up DeepSeek R1 with an External Graphics Card

Image 5: External Graphics Card

An external graphics card can speed up DeepSeek R1 significantly. With an AMD Radeon Pro W7700, the model runs at about 20 to 50 tokens per second, depending on the task.
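
One rough way to see why a graphics card helps so much: when generating text, a model reads essentially all of its weights from memory for every token, so decoding speed is usually capped by memory bandwidth divided by model size, provided the whole model fits in the card's memory. The sketch below applies that rule of thumb. The bandwidth values are hypothetical placeholders, not specs for the Pi 5 or the W7700, and the 8.4 GB weight size assumes the 14b model at about 4.8 bits per weight; substitute real numbers for your hardware.

```python
def decode_ceiling_tokens_per_s(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Rough upper bound on generation speed when decoding is memory-bound.

    Each new token needs (roughly) one full pass over the weights, so
    throughput cannot exceed bandwidth / weight size. Ignores compute
    limits, KV-cache reads, and host-to-GPU transfers.
    """
    return bandwidth_gb_s / weights_gb


def speedup(new_rate: float, old_rate: float) -> float:
    """Ratio of two generation rates."""
    return new_rate / old_rate


if __name__ == "__main__":
    weights_gb = 8.4  # assumed: 14b model at ~4.8 bits per weight
    # Placeholder bandwidths for illustration only; not measured specs.
    for name, bw in [("hypothetical board RAM", 17.0), ("hypothetical GPU VRAM", 500.0)]:
        print(f"{name}: <= {decode_ceiling_tokens_per_s(bw, weights_gb):.1f} tok/s")
    # Rates quoted in the article: 1.2 tok/s on the Pi CPU, 20-50 tok/s on the W7700
    print(f"measured speedup: {speedup(20, 1.2):.0f}x to {speedup(50, 1.2):.0f}x")
```

The ceiling ignores compute and cache traffic, so real throughput lands below it. The measured rates in the article (20 to 50 tokens per second versus 1.2 on the Pi alone) work out to roughly a 17x to 42x speedup.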

Running DeepSeek R1 on a Server

Image 6: Server

DeepSeek R1 can also run on a server. On a 192-core server, the model runs at about 4 tokens per second, a significant improvement over the Raspberry Pi.

GPUs on Raspberry Pi and Other Arm Boards

Image 7: GPUs on Raspberry Pi

GPUs can also be used with the Raspberry Pi and other Arm boards for a significant performance boost. AMD GPUs work well, and Intel's open-source drivers also work, so there are many options for running AI models on Arm-based devices.
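
When experimenting with a GPU on an Arm board, it helps to confirm which kernel driver actually bound to the card. On Linux, each DRM device in sysfs has a `device/driver` symlink pointing at its driver; the sketch below (the helper name `drm_card_drivers` is hypothetical) lists them. For an AMD card you would expect `amdgpu`; Intel's open-source drivers show up as `i915` or `xe`.

```python
import os
import re


def drm_card_drivers(drm_root: str = "/sys/class/drm") -> dict[str, str]:
    """Map each DRM card (card0, card1, ...) to the kernel driver bound to it.

    Reads the sysfs symlink <drm_root>/cardN/device/driver. Returns an
    empty dict when the directory does not exist (e.g. non-Linux systems).
    """
    drivers: dict[str, str] = {}
    if not os.path.isdir(drm_root):
        return drivers
    for entry in sorted(os.listdir(drm_root)):
        # Skip connector entries like card0-HDMI-A-1 and renderD* nodes.
        if not re.fullmatch(r"card\d+", entry):
            continue
        link = os.path.join(drm_root, entry, "device", "driver")
        if os.path.islink(link):
            drivers[entry] = os.path.basename(os.path.realpath(link))
    return drivers


if __name__ == "__main__":
    for card, driver in drm_card_drivers().items():
        print(f"{card}: {driver}")
```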

Conclusion

AI is still in a massive bubble: Nvidia lost more than half a trillion dollars in market value in a single day after DeepSeek R1's release. That does not mean AI is insignificant, and models like DeepSeek R1 have many potential applications. As the technology continues to evolve, it will be interesting to see how it is used.